AMD Releases Most Powerful AI Computing Accelerator

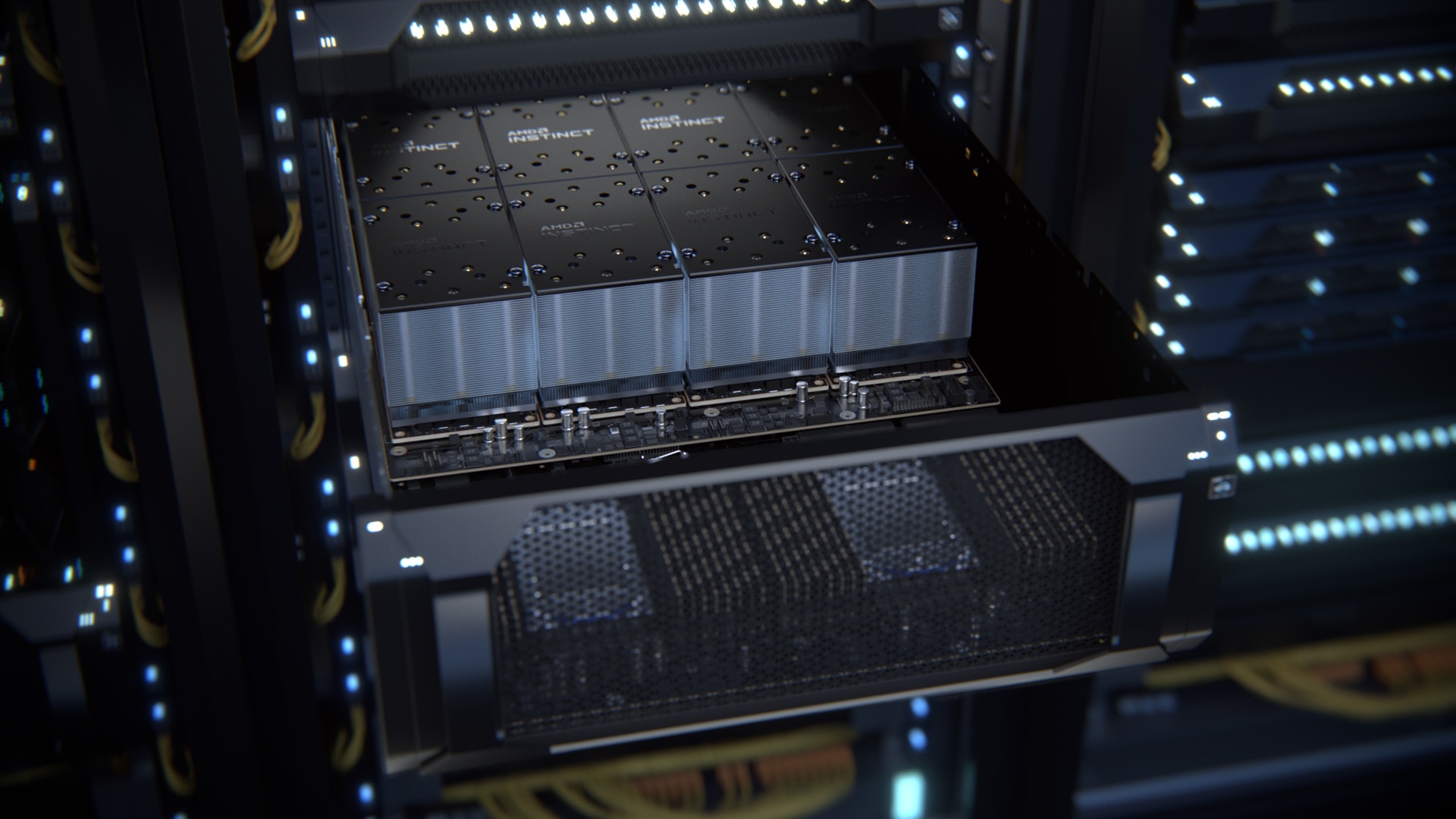

AMD has announced the new Instinct MI200 series of GPU accelerators, designed for exascale-class computing. The lineup includes the AMD Instinct MI250X, which AMD calls the world's fastest accelerator for HPC and AI workloads.

Built on the CDNA 2 architecture, AMD Instinct MI200 series models deliver up to 4.9 times the performance of competing accelerators in double-precision floating-point (FP64) HPC applications and more than 380 teraflops of peak theoretical half-precision (FP16) performance for AI workloads, AMD said. CDNA 2 introduces second-generation Matrix Cores that accelerate FP64 and FP32 matrix operations, with up to 4 times the peak theoretical FP64 performance of the company's previous-generation GPUs.

The new accelerators use the industry's first multi-die GPU design with 2.5D Elevated Fanout Bridge (EFB) technology, delivering 1.8 times more cores and 2.7 times higher memory bandwidth than AMD's previous-generation GPUs, with an industry-leading peak theoretical memory bandwidth of 3.2 terabytes per second. Up to eight Infinity Fabric links connect Instinct MI200 accelerators to 3rd-generation EPYC CPUs and other GPUs in the compute node to maximize system throughput and make it easier for CPU-side code to use the accelerators.

AMD's open-source ROCm software platform makes full use of Instinct accelerators. It supports computing environments that mix accelerators from different vendors and architectures. The Instinct MI250X and Instinct MI250 accelerators are available in the open-hardware OCP Accelerator Module (OAM) form factor. The Instinct MI210 accelerator will be available as a PCIe card in OEM servers.

The MI250X accelerator is currently available in the HPE Cray EX supercomputer, and more Instinct MI200 accelerators are expected in enterprise systems from AMD's major OEM and ODM partners, including Asus, Atos, Dell Technologies, Gigabyte, Hewlett Packard Enterprise (HPE), Lenovo, Penguin Computing and Supermicro.

Source: AMD