Smart Google microscope helps diagnose cancer

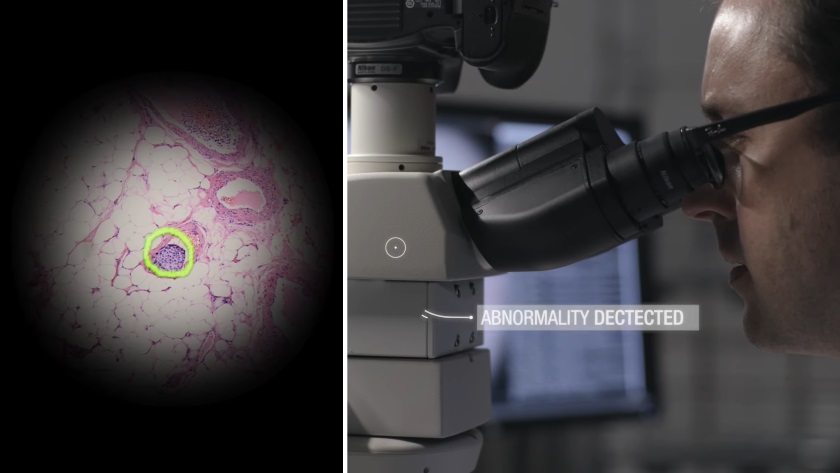

Google engineers have unveiled a prototype "augmented reality" microscope that uses artificial intelligence to detect cancer cells in tissue samples.

How does it work?

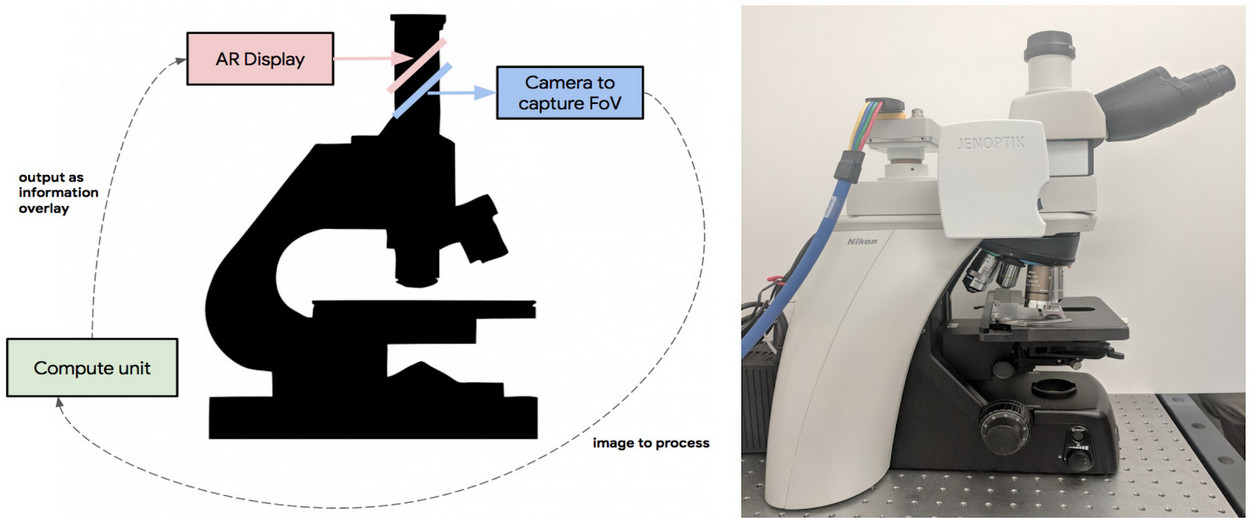

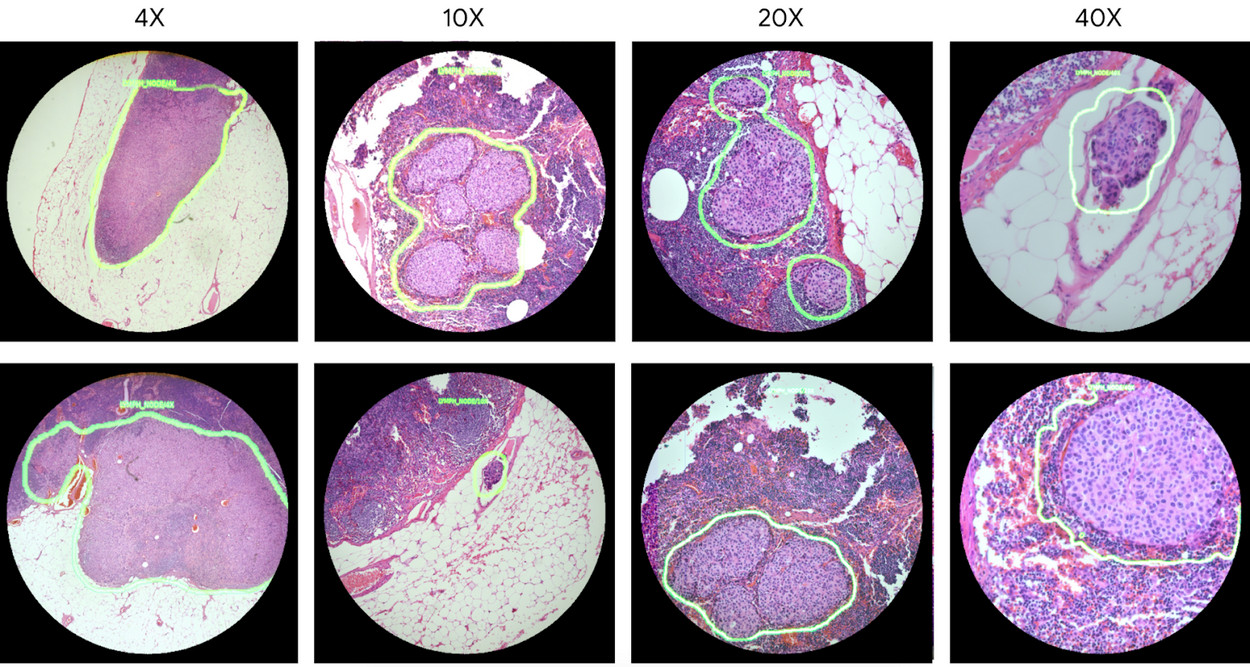

The search giant's developers only had to modify a conventional optical microscope. The area visible through the eyepiece is captured by a camera and fed to a neural network. The network identifies pathological cells, and a transparent display marks the corresponding area. In the augmented image these spots stand out immediately, which greatly simplifies the specialist's task. According to the project's authors, the approach is no less accurate than traditional diagnosis by a professional pathologist.
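The chain described above (camera frame, neural network, overlay on a transparent display) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Google's code: `toy_classifier` is a hypothetical stand-in that simply flags bright pixels, and the names `Frame`, `Mask`, `outline` and `process_frame` are invented for this example.

```python
from typing import Callable, List

Frame = List[List[float]]  # grayscale field of view, values in [0, 1]
Mask = List[List[bool]]    # True where the overlay display should light up


def toy_classifier(frame: Frame, threshold: float = 0.8) -> Mask:
    """Hypothetical stand-in for the neural network: flags bright pixels."""
    return [[pixel >= threshold for pixel in row] for row in frame]


def outline(mask: Mask) -> Mask:
    """Keep only the border of each flagged region, so the overlay
    marks the area without covering the tissue underneath."""
    height, width = len(mask), len(mask[0])

    def flagged(r: int, c: int) -> bool:
        return 0 <= r < height and 0 <= c < width and mask[r][c]

    return [
        [
            mask[r][c] and not all(
                flagged(r + dr, c + dc)
                for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1))
            )
            for c in range(width)
        ]
        for r in range(height)
    ]


def process_frame(
    frame: Frame, classify: Callable[[Frame], Mask] = toy_classifier
) -> Mask:
    """One pass of the loop: camera frame in, overlay for the display out."""
    return outline(classify(frame))


# Example: a 5x5 frame with a bright 3x3 patch in the middle.
frame = [[0.0] * 5 for _ in range(5)]
for r in range(1, 4):
    for c in range(1, 4):
        frame[r][c] = 0.9
overlay = process_frame(frame)
```

In a real device the classifier would be a trained network and the overlay would be projected into the optical path; here the overlay is just the border of the flagged patch.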

So far, experiments have been conducted with systems trained to recognize prostate and breast cancer. They already run in real time at 10 frames per second. When the sample is moved, the new view is scanned right away and the result is displayed without noticeable delay.
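To put the 10 frames per second figure in perspective: if capture, recognition and display run one after another, the whole chain has 100 ms per frame. A small sketch of that arithmetic follows; the stage timings are made up for illustration, and `frame_budget_ms` and `fits_budget` are hypothetical helper names.

```python
from typing import Iterable


def frame_budget_ms(frames_per_second: float) -> float:
    """Time available for one frame, in milliseconds."""
    return 1000.0 / frames_per_second


def fits_budget(stage_times_ms: Iterable[float], frames_per_second: float = 10.0) -> bool:
    """True if sequential stages finish within one frame's budget."""
    return sum(stage_times_ms) <= frame_budget_ms(frames_per_second)


# Hypothetical timings: capture, neural network, overlay rendering.
budget = frame_budget_ms(10.0)
ok = fits_budget([20.0, 60.0, 10.0])
too_slow = fits_budget([40.0, 60.0, 10.0])
```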

Why is it important?

Because not every laboratory can afford a digital microscope for early detection of cancer cells. It is much cheaper to build a camera and a display into an "analog" model using readily available components. How much such an upgrade would cost has not been reported.

What's next?

Google wants to train its microscope to recognize other types of cancer, as well as tuberculosis and malaria. The team has no plans yet for a wide rollout of the new technology: everything has to be tested first.

Source: Google