Cisco releases open-source tool to verify where an AI model actually came from

Downloading an AI model from a public repository carries a risk most companies ignore: the model you get may not be the model described. Cisco has released Model Provenance Kit, a free, open-source Python tool that fingerprints AI models and traces their lineage — helping enterprises catch unauthorized modifications before a tampered model reaches production.

The timing matters. Hugging Face now hosts over two million models, and developers routinely copy, fine-tune, merge, and re-upload them without any formal audit trail. Research published jointly by Anthropic, the UK AI Security Institute, and the Alan Turing Institute found that as few as 250 malicious documents are enough to implant a backdoor in a large language model, regardless of its size across the models the study tested. Once a poisoned model is in your stack, tracing the source is nearly impossible without tooling built for it.

How it works

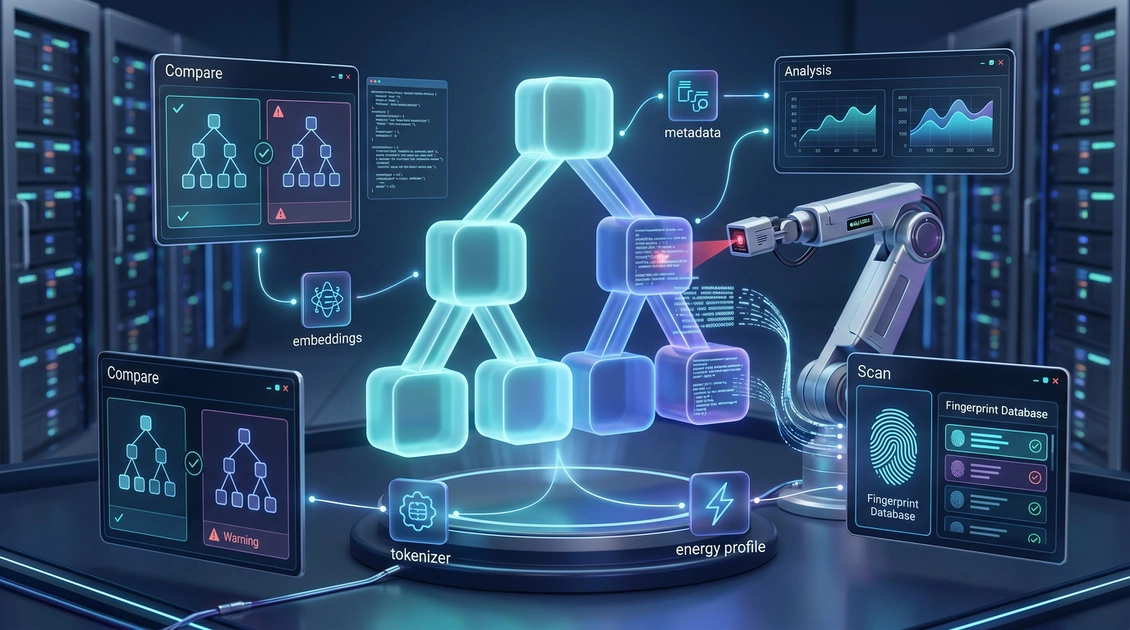

Model Provenance Kit goes beyond file metadata, which is trivially forged. Instead it builds a composite fingerprint from four signals: tokenizer similarity, embedding geometry, normalization-layer characteristics, and energy profiles across the model's weights. That combination is far harder to spoof than a model card or a version tag.
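To make the idea of a composite fingerprint concrete, here is a minimal sketch that combines two of the signals named above. It is not Cisco's implementation or API: the function names, the toy model dictionaries, the equal weighting, and the choice of Jaccard overlap and cosine similarity are all assumptions made for illustration.

```python
import math

def jaccard(vocab_a, vocab_b):
    """Tokenizer similarity: share of tokens the two vocabularies have in common."""
    a, b = set(vocab_a), set(vocab_b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def energy_profile(layers):
    """Fraction of the total squared weight magnitude held by each layer."""
    energies = [sum(w * w for w in layer) for layer in layers]
    total = sum(energies)
    return [e / total for e in energies] if total else energies

def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0

def composite_score(model_a, model_b, weights=(0.5, 0.5)):
    """Weighted blend of the two signals; the weights are hypothetical.

    Assumes both models have the same number of layers.
    """
    tok = jaccard(model_a["vocab"], model_b["vocab"])
    eng = cosine(energy_profile(model_a["layers"]),
                 energy_profile(model_b["layers"]))
    return weights[0] * tok + weights[1] * eng

# Toy stand-ins for real checkpoints (hypothetical data, tiny "layers").
base = {"vocab": ["a", "b", "c", "d"],
        "layers": [[1.0, 2.0], [0.5, 0.5], [3.0, 1.0]]}
finetuned = {"vocab": ["a", "b", "c", "d"],
             "layers": [[1.1, 2.0], [0.5, 0.6], [3.0, 0.9]]}
unrelated = {"vocab": ["a", "x", "y", "z"],
             "layers": [[0.1, 0.1], [4.0, 4.0], [0.2, 0.1]]}

print(composite_score(base, finetuned))  # close to 1: likely related
print(composite_score(base, unrelated))  # much lower: likely unrelated
```

The point of blending signals is that an attacker who rewrites the model card, or even swaps the tokenizer, still has to disturb the weight statistics to break the match, and doing that tends to damage the model itself.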

The tool runs in two modes. Compare mode does a direct one-to-one check between two models, useful for confirming whether a downloaded model genuinely descends from a specific base version or has been silently altered. Scan mode runs a broader search against Cisco's fingerprint database, which currently covers around 150 base models across 45 families — ranging from 135 million to 70 billion parameters — and is hosted on Hugging Face. Even if a model's documentation has been stripped or rewritten, scan mode can reconstruct a plausible provenance chain.
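The difference between the two modes can be sketched in a few lines. This is an illustrative model of the workflow, not the kit's actual interface: the function names, the 0.9 threshold, the three-number fingerprints, and the database entries are hypothetical.

```python
import math

def similarity(fp_a, fp_b):
    """Cosine similarity between two fingerprint vectors of equal length."""
    dot = sum(x * y for x, y in zip(fp_a, fp_b))
    norm = math.sqrt(sum(x * x for x in fp_a)) * math.sqrt(sum(y * y for y in fp_b))
    return dot / norm if norm else 0.0

def compare(fp_a, fp_b, threshold=0.9):
    """Compare mode: one-to-one check. Returns (score, is_match)."""
    score = similarity(fp_a, fp_b)
    return score, score >= threshold

def scan(fp, database, top_k=3):
    """Scan mode: rank every reference fingerprint by similarity to fp."""
    ranked = sorted(
        ((similarity(fp, ref), name) for name, ref in database.items()),
        reverse=True,
    )
    return [(name, score) for score, name in ranked[:top_k]]

# Hypothetical reference database: name -> fingerprint vector.
database = {
    "family-a/base": [0.9, 0.1, 0.3],
    "family-b/base": [0.1, 0.8, 0.2],
    "family-c/base": [0.2, 0.2, 0.9],
}
unknown = [0.85, 0.15, 0.35]  # fingerprint of a model with no documentation

print(compare(unknown, database["family-a/base"]))  # high score, match
print(scan(unknown, database))                      # best candidates first
```

Compare mode answers a yes-or-no question about a claimed parent, while scan mode needs no claim at all, which is why it still works when the documentation is missing.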

On Cisco's own benchmark of 111 model pairs — covering distillation, quantization, and cross-organization fine-tuning scenarios — the kit hit 96.4% accuracy and 98.1% precision, per Help Net Security. Those numbers come from controlled lab conditions; real-world performance on more complex LoRA merge chains remains unvalidated.
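For readers less familiar with the two metrics, the sketch below shows how each is defined and checks what the accuracy figure implies. The article does not give the breakdown of true and false positives, so the only count derived here is an inference from the published percentage, assuming accuracy was computed per pair.

```python
def accuracy(correct, total):
    """Share of all verdicts that were right."""
    return correct / total

def precision(true_positives, false_positives):
    """Share of 'these models are related' verdicts that were right."""
    return true_positives / (true_positives + false_positives)

TOTAL_PAIRS = 111          # benchmark size reported in the article
REPORTED_ACCURACY = 96.4   # percent, as reported

# Which whole-number counts of correct verdicts round to the reported figure?
matching_counts = [
    n for n in range(TOTAL_PAIRS + 1)
    if round(100 * accuracy(n, TOTAL_PAIRS), 1) == REPORTED_ACCURACY
]
print(matching_counts)  # [107]
```

On that reading, the kit got 107 of the 111 pairs right and 4 wrong. Precision above accuracy suggests that false alarms were rarer than missed relationships, but the source does not report the underlying counts.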

The supply-chain gap

US enterprises pulling models from open repositories currently have no formal audit standard to follow. NIST has not yet issued specific guidance on open-weight model lineage, and FTC liability for downstream harms caused by a poisoned third-party model remains legally unclear.

Alongside the toolkit, Cisco published a Model Provenance Constitution, a formal framework that defines what counts as a derivative model and maps provenance obligations against the OWASP Top 10 for LLM Applications and MITRE ATLAS. It's an attempt to set an industry baseline where none currently exists.

The kit is available now on GitHub. For any team deploying open-weight models in a regulated environment — finance, healthcare, legal — it's a practical starting point for building a defensible audit trail.